The SUSE cluster log is a single file that collects messages from the cluster engine and all of its components. Plenty of information is logged for each and every cluster operation. While the cluster runs smoothly, no one cares about it, but what if you run into an issue? A Linux cluster executes a number of processes every second, and a simple resource-monitoring command cannot trace the whole history of operations.

Knowing the various parts of the messages written to the SUSE cluster log file helps a lot during troubleshooting. The messages written along with each error code are predefined, and depending on the type of resource you may also see the output of some commands.

Note: the SUSE cluster is also referred to as SUSE HA in places in this document. SUSE HA uses pacemaker as its cluster engine, so this whitepaper applies to any pacemaker-based Linux cluster. Red Hat, Ubuntu, Debian, CentOS and many other distributions have adopted the pacemaker cluster manager.

We use a preconfigured SUSE Linux Enterprise High Availability cluster for the demonstration. The same applies to any Linux distribution that uses pacemaker as its cluster manager.

The SUSE cluster log file is /var/log/messages by default. Each line is made up of the following columns:

- The first three columns give the date and time of the event.

- The fourth column is the hostname. It is useful when several nodes send their logs to a remote syslog server.

- The fifth column is the name of the daemon that wrote the message, followed by its PID, for example crmd[1234]:

- The sixth column is the message type (severity), for example notice, info, warning or error.

- The rest of the line is the message itself: the function name, then the predefined text, followed by command output if any.
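The column layout above can be captured in a short parser. This is a minimal Python sketch, assuming the classic syslog timestamp format (Mar 12 10:15:01) rather than the ISO 8601 timestamps some rsyslog setups use; the regular expression, the parse_line name, the severity set and the sample line are hypothetical and not part of any SUSE tool.

```python
import re
from typing import Dict, Optional

# Hypothetical pattern for the classic layout described above:
# "Mon DD HH:MM:SS host daemon[pid]: severity: function: text"
LINE_RE = re.compile(
    r"^(?P<month>\w{3})\s+(?P<day>\d{1,2})\s+(?P<time>\d{2}:\d{2}:\d{2})\s+"  # columns 1-3
    r"(?P<host>\S+)\s+"                                                        # column 4
    r"(?P<daemon>[\w.-]+)(?:\[(?P<pid>\d+)\])?:\s+"                            # column 5
    r"(?P<rest>.*)$"                                                           # column 6 onwards
)

# Severity words assumed to appear in the sixth column
SEVERITIES = {"debug", "info", "notice", "warning", "error", "crit"}


def parse_line(line: str) -> Optional[Dict[str, Optional[str]]]:
    """Split one log line into the columns described above, or return None."""
    m = LINE_RE.match(line.rstrip("\n"))
    if m is None:
        return None
    fields = m.groupdict()
    rest = fields.pop("rest")
    head, sep, tail = rest.partition(": ")
    if sep and head in SEVERITIES:
        # Column 6 is a known severity; the remainder is "function: text"
        fields["severity"], fields["message"] = head, tail
    else:
        # No severity word here (e.g. corosync lines carry a subsystem tag instead)
        fields["severity"], fields["message"] = None, rest
    return fields


if __name__ == "__main__":
    sample = "Mar 12 10:15:01 node1 crmd[2345]: notice: example_fn: example message"
    print(parse_line(sample))
```

Returning None for lines that do not fit the pattern keeps the parser safe to run over a whole log that also contains messages from non-cluster services.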

SUSE Cluster log fifth column – daemon names

The SUSE HA (pacemaker) cluster sends logs from a few different daemons.

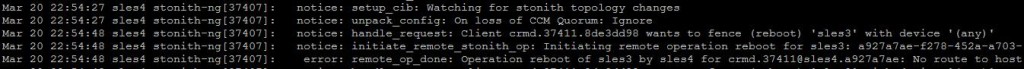
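A quick way to see which of these daemons is the most talkative is to count lines per fifth-column value. The following is a minimal sketch, assuming the classic syslog timestamp format; DAEMON_RE and count_by_daemon are hypothetical names chosen for illustration.

```python
import re
from collections import Counter
from typing import Iterable

# Captures the fifth column (daemon name, PID optional) after the
# three timestamp columns and the hostname column.
DAEMON_RE = re.compile(r"^\w{3}\s+\d{1,2}\s+[\d:]{8}\s+\S+\s+([\w.-]+)(?:\[\d+\])?:")


def count_by_daemon(lines: Iterable[str]) -> Counter:
    """Count how many log lines each daemon wrote."""
    counts: Counter = Counter()
    for line in lines:
        m = DAEMON_RE.match(line)
        if m:
            counts[m.group(1)] += 1
    return counts
```

On a cluster node, passing an open handle on /var/log/messages (readable by root) to count_by_daemon and calling most_common() on the result gives a per-daemon overview before you start grepping.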

Stonith-ng

The stonith daemon is responsible for fencing nodes. Different fencing methods can be used, such as SBD, DRAC5 or VMware. This daemon writes log messages when:

- The stonith topology changes, for example when a node joins.

- Stonith receives a fence command.

- The status of a fence command is reported.

To view only the stonith logs, use the command below:

#grep stonith-ng /var/log/messages

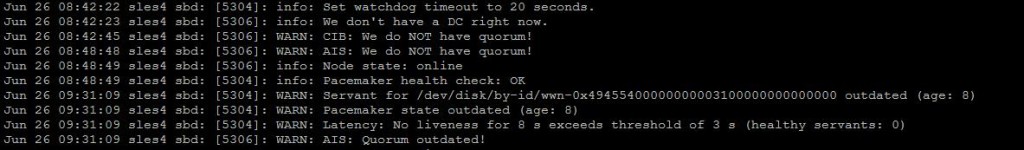
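grep stonith-ng matches the string anywhere on the line, so it may also pick up lines from other daemons that merely mention stonith-ng in their text. A minimal Python sketch that matches on the fifth column instead, and can optionally keep only certain severities, might look like this; daemon_lines and its parameters are hypothetical names, and the classic syslog timestamp format is assumed.

```python
import re
from typing import Iterable, Iterator, Optional, Set

# Hypothetical pattern: three timestamp columns, hostname, then
# "daemon[pid]:" (PID optional) and the rest of the line.
LINE_RE = re.compile(
    r"^\w{3}\s+\d{1,2}\s+[\d:]{8}\s+\S+\s+"
    r"(?P<daemon>[\w.-]+)(?:\[\d+\])?:\s+(?P<rest>.*)$"
)


def daemon_lines(
    lines: Iterable[str], daemon: str, severities: Optional[Set[str]] = None
) -> Iterator[str]:
    """Yield lines whose fifth column is exactly `daemon`.

    If `severities` is given, keep only lines whose sixth column is in it.
    """
    for raw in lines:
        line = raw.rstrip("\n")
        m = LINE_RE.match(line)
        if m is None or m.group("daemon") != daemon:
            continue
        if severities is not None:
            severity = m.group("rest").split(":", 1)[0]
            if severity not in severities:
                continue
        yield line
```

Passing an open /var/log/messages handle, the daemon name stonith-ng and the set {"error", "warning"} narrows the output to problem reports from the fencing daemon only.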

SBD

SBD (STONITH Block Device) is one type of fencing mechanism. If you configured a different fencing device, the daemon name in the log differs accordingly. Messages about the following events are labelled SBD:

- Loss of device

- Any configuration change in device

- Latency in accessing device

- Unavailability of the AIS service

- Change in quorum state

- Messages sent manually by root with the sbd command

To view only the SBD logs, use:

#grep sbd: /var/log/messages

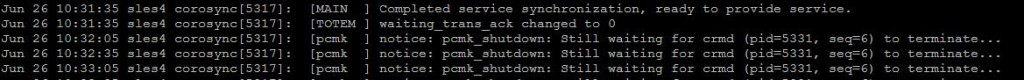

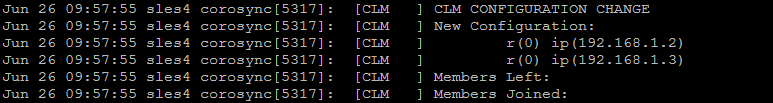

Corosync

Corosync plays a major role in a pacemaker cluster. It provides the complete infrastructure the cluster engine relies on: messaging, membership and heartbeat. Corosync interacts with many other daemons, and each log line records the name of the relevant subsystem along with the message. You may notice corosync logs for:

- Any error or configuration change in the heartbeat protocol, named TOTEM.

- Information about the corosync daemon process itself, named MAIN.

- Service-level changes and debug messages of the cluster manager (pacemaker/openais), named SERV and PCMK.

- Updates or changes in membership, named CLM.

Many more subsystems log under corosync, such as SYNC, LCK and MSG; we look at those in more depth later.

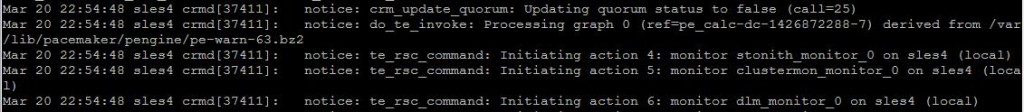
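To see which corosync subsystems are producing messages, the bracketed tag can be pulled out of each corosync line and counted. This sketch assumes the tag sits in square brackets, padded with spaces, right after the corosync[PID]: column (as in [TOTEM ]); count_corosync_subsystems is a hypothetical helper, and other corosync versions may format their lines differently.

```python
import re
from collections import Counter
from typing import Iterable

# Assumed corosync layout: "... corosync[PID]:   [TOTEM ] text"
TAG_RE = re.compile(r"\scorosync(?:\[\d+\])?:\s+\[(?P<tag>[A-Z0-9]+)\s*\]")


def count_corosync_subsystems(lines: Iterable[str]) -> Counter:
    """Count corosync log lines per bracketed subsystem tag (TOTEM, CLM, ...)."""
    counts: Counter = Counter()
    for line in lines:
        m = TAG_RE.search(line)
        if m:
            counts[m.group("tag")] += 1
    return counts
```

A sudden spike in TOTEM or CLM lines around an incident usually points at the network or membership layer rather than at a resource agent.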

CRM Daemon (crmd)

crmd is the main cluster resource manager daemon, and much of the useful information in the log file is written by it. It logs messages related to:

- Updates to the CIB (cib.xml)

- Current resource status and changes in status

- Resource operations such as start, stop and monitor

- Failure of any resource

- Stonith operations

The crmd daemon performs many operations in the background, so it writes messages frequently. In a problem scenario, the crmd logs show where the cluster detected the fault and what it did to recover.

#grep "crmd\[" /var/log/messages

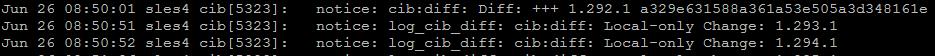
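Because crmd writes so many messages, grouping its lines by the function name at the start of the message part shows which code paths are busiest around an incident. The sketch below assumes the daemon[PID]: severity: function: text layout described at the start of this document; crmd_functions and the sample function names are hypothetical, and lines without a function prefix are simply skipped.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Assumed layout: "... crmd[PID]: severity: function: text"
CRMD_RE = re.compile(r"\scrmd\[\d+\]:\s+\w+:\s+(?P<func>\w+):\s")


def crmd_functions(lines: Iterable[str], top: int = 10) -> List[Tuple[str, int]]:
    """Return the `top` most frequent function names in crmd log lines."""
    counts: Counter = Counter()
    for line in lines:
        m = CRMD_RE.search(line)
        if m:  # lines without a "function:" prefix are skipped
            counts[m.group("func")] += 1
    return counts.most_common(top)
```

Running it over the slice of the log around the time of a failure, rather than the whole file, keeps routine monitor traffic from dominating the counts.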

CIB

The CIB (Cluster Information Base) holds the cluster configuration. It is stored as a single XML file, cib.xml, that contains the whole configuration of the cluster. The CIB logs messages related to:

- Quorum update

- CIB checksum (digest) failure during service start

- CIB read/write errors

Policy Engine (pengine)

The CRM acts on the decisions calculated by the policy engine. Logs from this daemon describe:

- The policy used by the CRM (for example, the restart policy applied when a resource failed).

- The transition graph and the policy engine input file. The transition graph is the set of actions, with their ordering and dependencies, that the CRM must carry out on the configured resources, and pengine saves the cluster state it used as input in a numbered file. A new transition and a new file are generated whenever the resource status changes, and the log records them.
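To locate the saved policy engine files referenced in pengine log lines, the file names can be extracted with a regular expression. This sketch assumes the pacemaker 1.x daemon name pengine (Pacemaker 2.0 renamed it pacemaker-schedulerd) and the pe-input-N.bz2, pe-warn-N.bz2 and pe-error-N.bz2 naming; pe_files is a hypothetical helper.

```python
import re
from typing import Iterable, List

# Policy engine files are saved as pe-input-N.bz2, pe-warn-N.bz2 or pe-error-N.bz2
PE_FILE_RE = re.compile(r"(\S*pe-(?:input|warn|error)-\d+\.bz2)")


def pe_files(lines: Iterable[str]) -> List[str]:
    """Collect policy engine file paths mentioned in pengine log lines."""
    found: List[str] = []
    for line in lines:
        if " pengine[" not in line:  # assumes the pacemaker 1.x daemon name
            continue
        found.extend(PE_FILE_RE.findall(line))
    return found
```

Each file found this way can then be replayed with crm_simulate (for example crm_simulate -S -x followed by the file path) to see what the policy engine decided at that point.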

Log File location

By default, all cluster components log through the system logging daemon (syslog).

To write corosync logs to a specific file instead, update the logging section of /etc/corosync/corosync.conf as below:

to_logfile: yes

to_syslog: no

logfile: /var/log/cluster/corosync.log
